TL;DR

- In the Agentic AI era, defensibility shifts from foundation models to vertical AI moats: proprietary data pipelines, orchestration layers, domain-specific tuning, and audit-grade control planes.

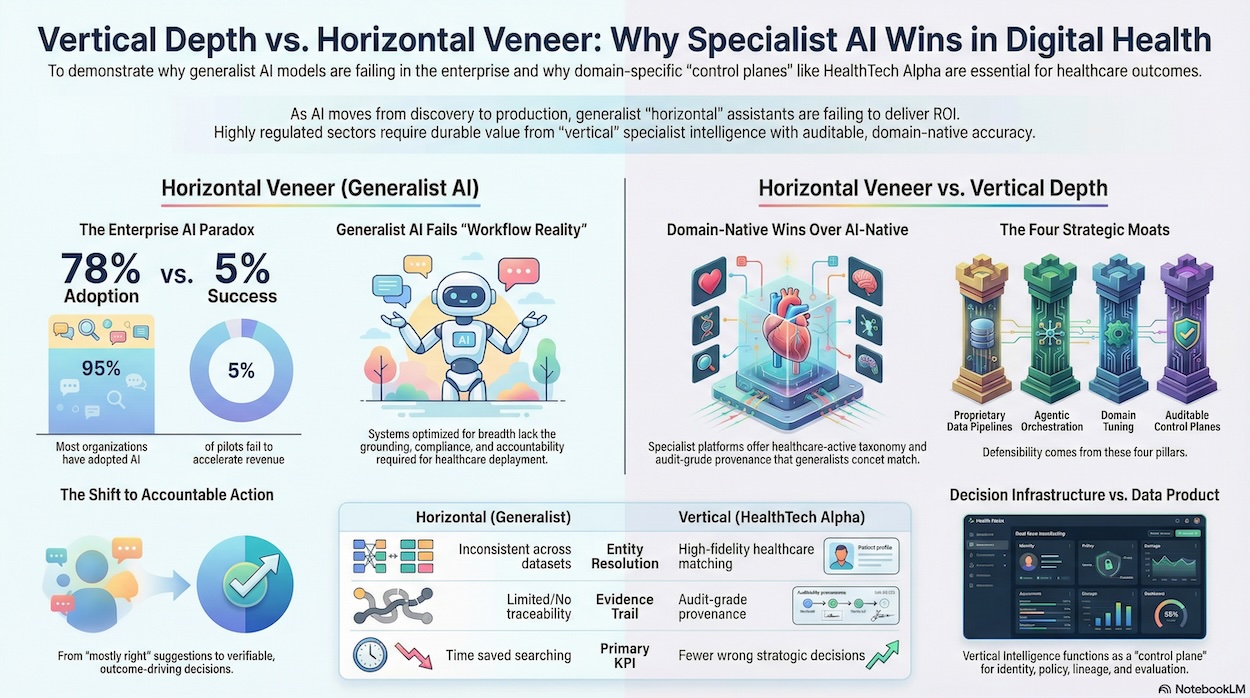

- ~78% of enterprises have adopted AI, yet ~95% of pilots fail to accelerate revenue, which suggests the bottleneck is not model capability but domain grounding, workflow integration, and accountability.

- Healthcare intensifies this dynamic: regulatory scrutiny, clinical evidence standards, and reimbursement complexity mean “mostly right” AI is operationally unacceptable.

- Digital Health Intelligence platforms act as decision infrastructure, providing lineage, entity resolution, taxonomy coherence, and governance required for enterprise deployment.

- Generalists enable breadth, but specialist Digital Health Intelligence wins because enterprises now optimise for fewer wrong decisions — not faster generic answers.

If 2024 was the year enterprises discovered generative AI, 2025–2026 is the period they’re learning—often painfully—what it takes to make it work in production. The early “horizontal” story was seductive: one generalist assistant, a universal interface, and a promise that knowledge work could be automated at scale.

But the data—and the architecture reality—are now pointing in a different direction: vertical depth will matter more than horizontal veneer. Even PitchBook’s own framing of the agentic AI transition in a recent op-ed converges on this conclusion: the durable value will not come from the models themselves (which are rapidly commoditising), but from the domain-specific moats that make automation reliable, auditable, and outcome-driving.

This is exactly where Digital Health Intelligence is heading. And it is why HealthTech Alpha is structurally aligned with the future, while generalist “good enough” data products are set up to disappoint the very stakeholders who need precision most: pharma, payers, medtech, providers, and investors.

The enterprise AI paradox: adoption is high, outcomes are not

The op-ed highlights the paradox defining enterprise AI today: AI adoption is widespread (78% of organisations), yet meaningful business outcomes remain elusive, with 95% of pilots cited as failing to accelerate revenue.

This gap is not a “model quality” problem. It’s a workflow reality problem:

- AI that isn’t grounded in the right data fails quietly.

- AI that can’t explain itself won’t be trusted.

- AI that isn’t compliant won’t be deployed.

- AI that can’t write back into systems of record won’t deliver outcomes.

The uncomfortable truth: generalist systems are optimised for breadth, not accountability—and the enterprise is now demanding accountability.

The market is bifurcating — but the real split is deeper: generalist vs specialist

The op-ed frames the new dichotomy as AI-embedded incumbents (legacy platforms retrofitted with copilots/agents) versus AI-native challengers (new platforms built around autonomous workflows).

That framework is right—but incomplete for regulated, domain-complex markets like healthcare.

Because in Digital Health, the most consequential divide is:

- Horizontal AI / generalist intelligence: broad coverage across industries, shallow domain semantics, inconsistent entity resolution, and limited evidence traceability.

- Vertical AI / specialist intelligence: healthcare-native taxonomy, high-fidelity entity matching, evidence linkage, regulatory awareness, and audit-grade provenance.

In other words: the winners won’t just be “AI-native.” They’ll be “domain-native.”

Moats in the agentic AI era are not UI — they are data rights, orchestration, tuning, and control

The op-ed is explicit that investors shouldn’t anchor defensibility on models; the defensible moats are emerging elsewhere—especially:

- Proprietary data pipelines + rights that create a feedback loop

- Agentic orchestration (planning, tool use, rollback, safety policies, evaluation harnesses)

- Domain-specific tuning that produces verifiable accuracy and reduces human intervention

- Auditable control planes that satisfy governance, compliance, lineage, and observability

Those four moats map cleanly onto what decision-grade Digital Health intelligence must be in 2026+.

And it’s exactly why a specialist platform like HealthTech Alpha is not simply “another database”—it is the kind of data plane that agentic systems require to create real-world outcomes in healthcare.
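To make the orchestration and control-plane moats above a little more concrete, here is a minimal Python sketch of a plan executor that checks a safety policy before each step, rolls back completed steps when something is denied or fails, and writes every event to an audit log. The names (`Step`, `execute_plan`, `allowed`, `audit_log`) are hypothetical and chosen for illustration; this is not taken from the op-ed or from any particular product.

```python
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple


@dataclass
class Step:
    name: str
    run: Callable[[dict], None]   # applies a change to the shared state
    undo: Callable[[dict], None]  # reverses that change


def execute_plan(steps: List[Step], state: dict, allowed: Set[str],
                 audit_log: List[Tuple[str, str]]) -> bool:
    """Run steps in order; on a policy denial or error, undo completed steps in reverse."""
    done: List[Step] = []
    for step in steps:
        if step.name not in allowed:          # safety policy check (assumed allow-list)
            audit_log.append(("denied", step.name))
            break
        try:
            step.run(state)
        except Exception:
            audit_log.append(("failed", step.name))
            break
        done.append(step)
        audit_log.append(("ok", step.name))
    else:
        return True                           # every step ran; nothing to roll back
    for step in reversed(done):               # rollback, newest change first
        step.undo(state)
        audit_log.append(("rolled_back", step.name))
    return False


# Illustrative usage: the export step is not on the allow-list, so the draft is undone.
state = {"notes": []}
steps = [
    Step("draft_summary", lambda s: s["notes"].append("summary"), lambda s: s["notes"].pop()),
    Step("export_record", lambda s: s["notes"].append("export"), lambda s: s["notes"].pop()),
]
log: List[Tuple[str, str]] = []
ok = execute_plan(steps, state, allowed={"draft_summary"}, audit_log=log)
```

The design point is that the policy check, the rollback path, and the audit trail live in the executor rather than in the model, which is one way to read the claim that defensibility sits in the control plane.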

Why healthcare punishes horizontality

Most enterprise functions can tolerate “mostly right” answers. Healthcare generally cannot.

The op-ed's own outlook notes that in regulated verticals generalised assistants rarely suffice, and it explicitly calls out that vertical constraints in areas like law and healthcare require domain-specific tuning and compliance.

Digital Health intelligence sits at the intersection of:

- clinical claims and evidence,

- regulatory and reimbursement realities,

- provider workflows,

- enterprise procurement,

- data privacy constraints,

- and rapidly shifting market structure (M&A, partnerships, productisation).

A generalist platform can cover “companies.” But Digital Health buyers don’t just need “companies.”

They need answers to questions like:

- Which solutions have credible clinical evidence signals vs marketing claims?

- Which partnerships are strategically meaningful vs PR noise?

- Which ventures map to specific care pathways, specialities, and budget owners?

- What is actually scaling inside pharma, payers, providers—and why?

Those are semantic questions, not keyword queries. And semantics are exactly what vertical platforms encode.

HealthTech Alpha as a “control plane for Digital Health intelligence”

One of the op-ed’s most important points is architectural: in an AI-native world, the control plane (identity, policy, lineage, evaluation, observability) becomes the moat.

Translate that into Digital Health:

A platform that wants to power AI-driven decision-making in healthcare must provide:

- Lineage: Where did this claim come from? What’s the source? What’s the evidence trail?

- Entity resolution: The same company, spelt differently across five datasets, must resolve to a single entity.

- Taxonomy coherence: “Digital therapeutics,” “remote monitoring,” and “AI imaging” aren’t interchangeable buckets—they drive different buying, risk, and outcomes.

- Governance: What can the model access? What can it export? What can it recommend?

- Auditability: If this insight influences investment, partnership, or strategy, can it be defended?

That’s the difference between a “data product” and a decision infrastructure layer.

This is where HealthTech Alpha’s positioning is strongest: it is not competing to be the most general dataset. It is competing to be the trusted intelligence layer for one of the most complex markets in the world.
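The entity-resolution and lineage requirements listed above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the suffix list, the dataset names, and the company names are all made up, and real entity matching would need far more than name normalisation (identifiers, domains, fuzzy matching, human review).

```python
import re
from dataclasses import dataclass, field

# Hypothetical legal suffixes to strip; a production system would use a curated list.
LEGAL_SUFFIXES = {"inc", "ltd", "llc", "gmbh", "corp", "co", "plc"}


def normalise_name(raw: str) -> str:
    """Lowercase, replace punctuation with spaces, and drop trailing legal suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", raw.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


@dataclass
class Entity:
    canonical_key: str
    aliases: set = field(default_factory=set)
    sources: list = field(default_factory=list)  # lineage: (dataset, raw name) pairs


def resolve(records):
    """Group (dataset, raw_name) records into entities keyed by normalised name."""
    entities = {}
    for dataset, raw_name in records:
        key = normalise_name(raw_name)
        entity = entities.setdefault(key, Entity(canonical_key=key))
        entity.aliases.add(raw_name)
        entity.sources.append((dataset, raw_name))  # keep the trail back to the source
    return entities


# Made-up records: three spellings of one company plus one unrelated company.
records = [
    ("registry_a", "Acme Health, Inc."),
    ("news_feed", "ACME Health"),
    ("funding_db", "Acme-Health Ltd"),
    ("registry_a", "Beta Care GmbH"),
]
entities = resolve(records)
```

Each resolved entity keeps the list of datasets and raw spellings it was built from, so a downstream claim about the company can be traced back to where it came from.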

The real ROI in 2026 is not faster answers — it’s fewer wrong decisions

The op-ed notes the market is moving from “suggestion to accountable action,” and that enterprises will reward reliability, auditability, and improving unit economics (often measured as cost per action, fewer fallbacks, fewer human interventions).
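As a rough illustration of those unit-economics measures, the sketch below computes cost per action, fallback rate, and human-intervention rate from a log of agent actions. The `ActionRecord` fields and the sample figures are assumptions made for the example, not data from the op-ed.

```python
from dataclasses import dataclass


@dataclass
class ActionRecord:
    cost_usd: float     # assumed: fully loaded cost of one agent action
    fell_back: bool     # the agent fell back to a default or simpler path
    needed_human: bool  # a person had to step in


def unit_economics(records):
    """Return average cost per action plus fallback and human-intervention rates."""
    n = len(records)
    if n == 0:
        raise ValueError("no actions recorded")
    return {
        "cost_per_action": sum(r.cost_usd for r in records) / n,
        "fallback_rate": sum(r.fell_back for r in records) / n,
        "human_intervention_rate": sum(r.needed_human for r in records) / n,
    }


# Made-up sample log of four actions.
actions = [
    ActionRecord(0.5, False, False),
    ActionRecord(0.25, True, False),
    ActionRecord(0.75, False, True),
    ActionRecord(0.5, False, False),
]
metrics = unit_economics(actions)
```

Tracked over time, falling values on all three measures are what "improving unit economics" would look like in practice.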

In Digital Health, the analogous KPI is not “time saved searching.”

It’s:

- fewer wasted diligence cycles,

- fewer dead-end vendor evaluations,

- fewer partnerships that don’t scale,

- fewer strategy decks built on faulty assumptions,

- fewer missed signals of what is actually working in care delivery and commercialisation.

Generalist platforms help you start research.

Specialist platforms help you finish decisions.

Our prediction: specialists become the substrate that horizontals must integrate

Here is the directional bet that follows logically from the op-ed’s thesis:

- Horizontal copilots will be everywhere.

- But they will depend on vertical data planes to be trusted.

- The most valuable vertical data planes will be those that already have:

  - proprietary pipelines,

  - domain semantics,

  - feedback loops,

  - and governance surfaces.

So the “vertical vs horizontal” debate isn’t actually a feature debate.

It’s an infrastructure debate.

And the infrastructure winners are the platforms that can say, credibly:

“You can build agents on top of us—and those agents will be grounded, governed, and auditable inside your enterprise workflows.”

That is what “Digital Health intelligence” becomes in the agentic AI era.

Bottom line

The op-ed’s argument about AI moats can be summarised as: models commoditise; domain control planes compound.

Healthcare magnifies this dynamic. The cost of being wrong is too high, and the burden of proof is too strict. That’s why the future of Digital Health intelligence is vertical—and why HealthTech Alpha is structurally positioned to be the system-of-truth data plane that agentic workflows will require.

Generalists will remain useful for breadth.

But specialists will own outcomes.

And in 2026, outcomes are the only metric that matters.